Algorithms may power artificial intelligence, but they learn from data, and before that can happen the data must be interpreted, classified, and organised. This first step is called data annotation, and it underpins every successful AI system.

Data annotation is often underestimated, yet it is both an art and a science. It demands technical precision, clearly defined processes, and scalable tools, but it also calls for human judgment, contextual knowledge, and a sense of nuance. Done properly, it forms the basis of accurate, reliable, and intelligent AI systems.

What Is Data Annotation?

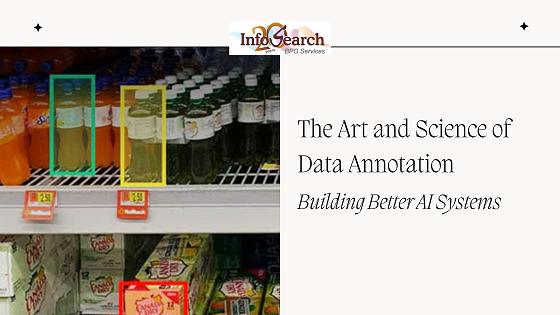

Data annotation is the process of labeling data so that machine learning models can identify patterns and make predictions. It applies to many types of data, such as:

- Images (Object detection, segmentation)

- Video (Object tracking, activity detection)

- Text (Sentiment analysis, entity recognition)

- Audio (Speech transcription, emotion detection)

- Sensor data (LiDAR point clouds for self-driving vehicles)

Annotated data acts as the teacher that guides AI models during training. The clearer and more precise the labels, the better the model performs.

The Science: Structure, Consistency and Scale

The scientific side of data annotation rests on systematic procedures that make labels accurate and repeatable.

Clear Guidelines

Every annotation project has detailed guidelines. These specify what to label, how labels should be applied, and how to handle edge cases. Clear guidelines reduce ambiguity and improve consistency among annotators.
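One way to make guidelines enforceable is to encode part of them as a machine-checkable rule set. The sketch below is only an illustration: the sentiment label set, the `Annotation` record, and the rule that an "unsure" label needs a note are all assumptions made for the example, not requirements from any particular tool.

```python
from dataclasses import dataclass

# Hypothetical label set for an illustrative sentiment task.
ALLOWED_LABELS = {"positive", "negative", "neutral", "unsure"}


@dataclass
class Annotation:
    item_id: str
    label: str
    note: str = ""


def check_annotation(ann):
    """Return a list of guideline violations (empty if the annotation passes)."""
    problems = []
    if ann.label not in ALLOWED_LABELS:
        problems.append(f"unknown label: {ann.label!r}")
    # Assumed edge-case rule: "unsure" must come with an explanatory note.
    if ann.label == "unsure" and not ann.note.strip():
        problems.append("'unsure' requires a note explaining the edge case")
    return problems
```

Calling `check_annotation(Annotation("t1", "unsure"))` would report the missing note, which gives annotators immediate feedback on an edge-case rule instead of leaving it to a later review.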

Quality Control

Multi-level quality assurance, such as peer review, consensus scoring, and automated validation, is used to maintain high accuracy. Without organised quality assurance, even large datasets can carry latent errors that undermine model performance.
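Consensus scoring can be sketched as a majority vote with an agreement ratio per item. This is a minimal illustration, assuming each item is labeled independently by several annotators; the function names and the 0.6 threshold are hypothetical choices, and real projects often use more robust agreement statistics.

```python
from collections import Counter


def consensus_label(votes):
    """Return (label, agreement) for one item, or (None, agreement) on a tie."""
    if not votes:
        raise ValueError("votes must not be empty")
    counts = Counter(votes).most_common()
    top_label, top_count = counts[0]
    agreement = top_count / len(votes)
    # A tie between the two most frequent labels means there is no consensus.
    if len(counts) > 1 and counts[1][1] == top_count:
        return None, agreement
    return top_label, agreement


def flag_for_review(item_votes, min_agreement=0.6):
    """Return ids of items that tie or fall below the agreement threshold."""
    flagged = []
    for item_id, votes in item_votes.items():
        label, agreement = consensus_label(votes)
        if label is None or agreement < min_agreement:
            flagged.append(item_id)
    return flagged
```

Items returned by `flag_for_review` are natural candidates for peer review, so the expensive human check is spent where annotators actually disagree.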

Scalable Workflows

Artificial intelligence demands very large amounts of data. Scientific annotation processes rely on platforms, task routing systems, and automation tools to scale labeling efficiently without sacrificing quality.

This is what makes annotation a science: it is structured and repeatable.
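As a rough illustration of task routing, the sketch below assigns each item to more than one annotator in round-robin order, so that labels can later be compared. The `route_tasks` function and its `overlap` parameter are hypothetical, and annotator names are assumed to be unique; production platforms typically also weigh skills, workload, and availability.

```python
from itertools import cycle


def route_tasks(item_ids, annotators, overlap=2):
    """Assign each item to `overlap` distinct annotators in round-robin order."""
    if overlap > len(annotators):
        raise ValueError("overlap cannot exceed the number of annotators")
    assignments = {name: [] for name in annotators}
    rotation = cycle(annotators)
    for item_id in item_ids:
        # Consecutive draws from the rotation are distinct while overlap <= len(annotators).
        for _ in range(overlap):
            assignments[next(rotation)].append(item_id)
    return assignments
```

Routing every item to at least two people costs more labeling time, but it is what makes agreement-based quality checks possible.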

The Art: Context and Judgment

Automation does not eliminate the role of human intelligence in annotation. Language, images, and real-life situations are often rich with complexity that machines alone can hardly comprehend.

Understanding Context

A single picture can tell many different stories depending on the situation. Is the man running to keep fit or to escape? Is the comment sarcastic or genuine? Human annotators bring cultural awareness and situational knowledge that AI lacks.

Handling Ambiguity

Not all data fits neatly into categories. In uncertain, unusual, or complex cases, annotators need to make judgment calls. This ability to navigate gray zones is what makes annotation an art.

Domain Expertise

Specialised fields such as healthcare, finance, and law require annotators with subject-matter knowledge. Properly labelling a medical scan or a legal document requires more than general guidelines.

These human factors are necessary to make datasets reflect reality rather than naive assumptions.

Why Data Annotation Has a Direct Effect on AI Performance

The quality of annotated data determines how well an AI model works in the real world.

High-quality annotation results in:

- Improved accuracy and precision

- Reduced bias and errors

- Better performance in edge cases

- Greater reliability and credibility

Poor annotation, however, can lead models to misinterpret data, make unsafe decisions, or produce results that fail to generalize. In high-stakes systems such as healthcare diagnostics or autonomous driving, annotation quality can bear directly on safety.

The Role of Automation in Modern Annotation

To manage growing data volumes, many workflows incorporate AI-assisted annotation tools. Such systems can pre-label data, propose bounding boxes, or suggest likely entities in text.

Nevertheless, automation is most effective when paired with human oversight. Human-in-the-loop systems let annotators review, correct, and refine machine-generated labels, combining speed with precision.

This partnership shows how the art and science of annotation merge: technology provides scale, and humans provide interpretation.
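A human-in-the-loop step can be sketched as a triage on model confidence. This is a simplified illustration under stated assumptions: the dictionary fields (`id`, `label`, `confidence`), the 0.9 threshold, and the idea of auto-accepting confident pre-labels are all hypothetical, and many teams still spot-check accepted labels.

```python
def triage_prelabels(prelabels, threshold=0.9):
    """Split model pre-labels into auto-accepted and human-review queues."""
    accepted, review = [], []
    for item in prelabels:
        if item["confidence"] >= threshold:
            accepted.append(item)
        else:
            review.append(item)
    return accepted, review


def apply_correction(item, human_label):
    """Return a copy of the item carrying the human label and a provenance flag."""
    corrected = dict(item)  # copy so the original pre-label stays available for audit
    corrected["label"] = human_label
    corrected["source"] = "human"
    return corrected
```

Keeping the original pre-label alongside the corrected copy makes it possible to measure how often the model's suggestions were wrong, which in turn helps tune the threshold.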

Building Better AI Through Better Annotation

Organizations that invest in AI tend to focus heavily on model architecture and algorithms. Yet even the most sophisticated models cannot compensate for poorly labelled data.

Building better AI systems requires:

- Investing in skilled annotators

- Developing strong guidelines and training programs

- Introducing strict quality control measures

- Taking advantage of scalability and collaboration tools

Data annotation is not merely a preliminary task; it is an intelligent activity that shapes the intelligence of the finished system.

Final Thoughts

Creating smart AI systems involves an enormous amount of work to make data intelligible. Data annotation is both an art and a science because it combines a structured approach with human judgment.

The quality of annotation will only become more important as AI keeps developing and reaches more spheres of our lives. The organizations that recognize this and invest in it will be the ones creating AI systems that are not only powerful but also reliable and useful.

Contact Infosearch for your annotation services.
